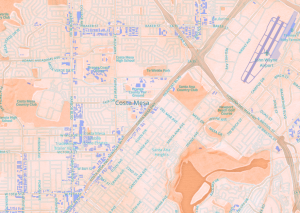

Jim Detwiler’s “Introduction to Web Mapping” outlines the history and basic concepts of web mapping. Detwiler begins by weighing the advantages and disadvantages of digital and paper maps. There is much current debate about the value of digital maps; with their advent, there seems to be a lost sense of adventure and exploration. However, one clear advantage of digital maps is that they are relatively inexpensive to produce compared with traditional maps. They are also easier to distribute to a large audience. Because they are online, they are much easier to update, with no need to redraw, reprint, and redistribute. Digital maps can also be interactive.

However, this is not to discount the advantages of traditional maps. Digital maps require the Internet, and if you think about when you actually need a map, you are most likely exploring somewhere unfamiliar. Will that location even have Internet access? Not necessarily; digital maps are therefore “vulnerable to problems of servers and networks going down” (Detwiler). This is where paper maps have the upper hand: they are much more reliable in this sense. Paper maps also offer far higher resolution (1200–3400 DPI), which is advantageous whenever you need to see the map very clearly (which is probably most of the time).

Reading through the categorization of web maps by Dutch cartographer Menno-Jan Kraak that Detwiler includes in his overview, I was reminded of an app I had read about. The London-based company POKE developed an app called Pints in the Sun, which helps users find the nearest pub that is out of the shade. The need for such an app is distinctly British, but it is nonetheless an interesting example that embodies several kinds of web maps. Users can find a pub in one of two ways: “searching for a specific spot, or just browsing the map to find one that you like the look of” (Dombrosky). They then adjust the ‘sun timeline’ at the bottom of the map to set the time of day, and the map projects the corresponding shadows cast by the three-dimensional buildings.

Pints in the Sun could be classified as several different types of web maps, most obviously an Analytic Web Map and a Collaborative Web Map. According to POKE, the developers used HTML5 geolocation and the Foursquare API (Application Programming Interface) “to locate a suitable list of pubs before loading building outline data from OpenStreetMap and rendering it in 3D using three.js (map projection conversion courtesy of the D3 library)” (www.pokelondon.com). Its use of OpenStreetMap makes it a collaborative map, since it draws on a “distributed network of people to create and maintain the map” (Detwiler). Pints in the Sun qualifies as an Analytic Web Map in several ways, most distinctly in its solar almanac calculations, which are performed with SunCalc, a JavaScript library.
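To give a rough sense of what a solar almanac calculation involves, the following Python sketch estimates the sun's elevation for a given latitude, day of the year, and hour, and from it the length of a building's shadow on flat ground. This is a simplified illustration under stated assumptions, not SunCalc's algorithm or POKE's code: it uses Cooper's approximation for solar declination, treats the hour as local solar time (ignoring longitude offset, the equation of time, and atmospheric refraction), and the function names are hypothetical.

```python
import math


def solar_elevation_deg(lat_deg: float, day_of_year: int, solar_hour: float) -> float:
    """Approximate solar elevation angle in degrees (hypothetical helper).

    Assumptions: Cooper's declination formula; solar_hour is local *solar*
    time, so longitude offset, equation of time, and refraction are ignored.
    """
    # Solar declination in radians (Cooper's approximation).
    decl = math.radians(23.45) * math.sin(
        math.radians(360.0 * (284 + day_of_year) / 365.0)
    )
    # Hour angle: the sun moves 15 degrees per hour away from solar noon.
    hour_angle = math.radians(15.0 * (solar_hour - 12.0))
    lat = math.radians(lat_deg)
    sin_elev = (math.sin(lat) * math.sin(decl)
                + math.cos(lat) * math.cos(decl) * math.cos(hour_angle))
    # Clamp to guard against floating-point values just outside [-1, 1].
    sin_elev = max(-1.0, min(1.0, sin_elev))
    return math.degrees(math.asin(sin_elev))


def shadow_length(building_height_m: float, elevation_deg: float) -> float:
    """Shadow length on flat ground (hypothetical helper); infinite when the sun is down."""
    if elevation_deg <= 0:
        return math.inf
    return building_height_m / math.tan(math.radians(elevation_deg))


if __name__ == "__main__":
    # Roughly London (latitude 51.5 N) on 21 June (day 172).
    for hour in (9.0, 12.0, 18.0, 21.0):
        elev = solar_elevation_deg(51.5, 172, hour)
        print(f"{hour:5.1f}h  elevation {elev:6.2f} deg  "
              f"20 m building shadow {shadow_length(20.0, elev):.1f} m")
```

For example, `solar_elevation_deg(51.5, 172, 12.0)` (roughly London at solar noon on 21 June) gives an elevation of about 62 degrees, so a 20 m building casts a shadow of roughly 10.7 m; by 21:00 solar time the sun is below the horizon and `shadow_length` returns `math.inf`. An app like Pints in the Sun would additionally need the sun's azimuth to know which direction each shadow falls.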

Detwiler, Jim. “Introduction to Web Mapping.”

Dombrosky, Pete. “Want to Find a Pub in the Sun? There’s an App for That.” Thrillist. Thrillist.com, 12 June 2014. Web. 16 Nov. 2014.

“Pints in the Sun: A Minimal Viable Side Project.” POKE. N.p., 5 June 2014. Web. 16 Nov. 2014.