http://www.moma.org/interactives/exhibitions/2012/inventingabstraction/?page=home

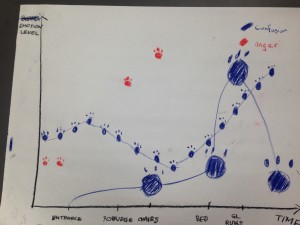

This JPEG is the static version of an interactive network diagram made for the website of MoMA’s Inventing Abstraction exhibition, which ran from December 2012 to April 2013. It was made by Paul Ingram and Mitali Banerjee, respectively a professor and a doctoral candidate at Columbia Business School, in collaboration with the exhibition’s curatorial and design team. “Vectors connect individuals whose acquaintance with one another…could be documented,” states the description on the website; in other words, a line appears only where a relationship is explicitly documented. Each node represents an artist whose work was in the exhibition, and the nodes are arranged more or less geographically. The names marked in red have 24 or more connections. The interactive version on the website serves as a navigational device: clicking on a name zooms in on that artist and their network of relationships. At the same time, the webpage presents thumbnails of that artist’s works in the exhibition and, for the more highly connected artists marked in red, a short biography.
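The website says only that red marks artists with 24 or more connections, which in network terms reads like a simple degree threshold. The Python sketch below illustrates that reading under stated assumptions: “connections” are taken to be undirected documented acquaintances, each pair counted once. The function names and the toy edge list are hypothetical and are not MoMA’s data or method.

```python
from collections import Counter

# Threshold stated on the exhibition website: 24 or more connections.
RED_THRESHOLD = 24


def degrees(edges):
    """Count connections per artist from an undirected list of (a, b) pairs.

    Assumption: a relationship recorded twice (e.g. (a, b) and (b, a))
    counts once, and self-pairs are ignored.
    """
    unique_pairs = {frozenset(pair) for pair in edges if pair[0] != pair[1]}
    counts = Counter()
    for pair in unique_pairs:
        for artist in pair:
            counts[artist] += 1
    return counts


def highlighted(edges, threshold=RED_THRESHOLD):
    """Return, sorted by name, the artists whose degree meets the threshold."""
    return sorted(a for a, d in degrees(edges).items() if d >= threshold)


# Hypothetical toy network: one "hub" linked to 24 placeholder artists.
toy_edges = [("hub", f"artist_{i}") for i in range(1, 25)]
toy_edges += [("artist_1", "artist_2"), ("artist_3", "hub")]  # second pair repeats an edge in reverse
print(highlighted(toy_edges))  # ['hub']
```

Counting each pair only once matters here: if a relationship were recorded from both artists’ sides and counted twice, a naive tally could push an artist over the threshold.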

While this network diagram was not made for research purposes, it still raises issues that face the use of network analysis in the digital humanities. One of the dangers that Scott Weingart discusses in his blog post “Demystifying Networks” is the reduction of data imposed by current limitations in network analysis algorithms. To keep the network manageable for software and sparse enough to comprehend visually, all of the possible relationships between artists are reduced to the vague concept of “acquaintance.” Unfortunately, the website never defines what it means by that word. Did the artists meet in person? Did they carry on a correspondence? Did they work together or otherwise exchange artistic ideas? The diagram does not distinguish among any of these types of relationship. Furthermore, the website does not explain why 24 connections was chosen as the criterion for marking an artist in red. What, if anything, does having at least that many connections signify? Does it mean that an artist’s ideas were more influential? That they were more extroverted? That they traveled more? MoMA has produced a very provocative data visualization, but it would have been more revealing with a little more documentation.

That said, this diagram is interesting because it highlights some artists who may not be as well known as others. Three artists whom I would not have expected to be highly connected are marked in red: Sonia Delaunay-Terk, Natalia Goncharova, and Mikhail Larionov. The significance of these lesser-known Russian and women artists is one of the many questions that this network diagram raises.